P5.js | JavaScript | Librosa (MIR) | PyAudio

In this project I investigated real-time Music Information Retrieval (MIR), in particular identifying chords picked up by the computer microphone and showing them in a browser.

Buffers of audio were captured from the device microphone (using PyAudio) and processed one at a time (using Librosa). From each buffer I extracted a chromagram: a 12-dimensional vector holding the energy of each chromatic note, folded across octaves.
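To make the "folded across octaves" idea concrete, here is a minimal pure-Python sketch. It is not the project's code: the function name `pitch_class_profile`, the MIDI range, and the buffer size are all assumptions, and it projects the buffer directly onto semitone frequencies instead of using a Constant-Q or STFT front end. In practice Librosa's `librosa.feature.chroma_stft` or `librosa.feature.chroma_cqt` would do this job.

```python
import cmath
import math


def pitch_class_profile(samples, sr, midi_lo=48, midi_hi=95):
    """Return 12 energies, one per pitch class (0 = C, ..., 11 = B).

    Hypothetical helper for illustration: measures the energy at each
    equal-tempered semitone and folds all octaves onto one pitch class.
    """
    n = len(samples)
    # Hann window to limit leakage between neighbouring semitones.
    windowed = [s * (0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)))
                for i, s in enumerate(samples)]
    chroma = [0.0] * 12
    for midi in range(midi_lo, midi_hi + 1):
        freq = 440.0 * 2 ** ((midi - 69) / 12)  # A4 = MIDI 69 = 440 Hz
        step = cmath.exp(-2j * math.pi * freq / sr)
        phasor = 1 + 0j
        acc = 0j
        for x in windowed:  # single-frequency DFT at this semitone
            acc += x * phasor
            phasor *= step
        chroma[midi % 12] += abs(acc) ** 2  # fold octaves together
    peak = max(chroma)
    return [c / peak for c in chroma] if peak > 0 else chroma


# Example buffer: a 440 Hz sine (the note A), 4096 samples at 22050 Hz.
sr = 22050
tone = [math.sin(2 * math.pi * 440.0 * i / sr) for i in range(4096)]
profile = pitch_class_profile(tone, sr)
strongest = max(range(12), key=lambda k: profile[k])  # index 9 is A
```

The buffer length matters here: shorter buffers react faster but blur neighbouring semitones together, especially in the low octaves.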

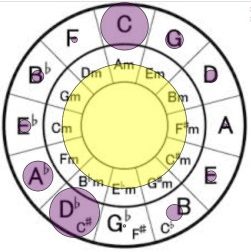

Using P5.js, I then visualized this chromagram on a circle of fifths. Here is a video of the demo running on a pre-recorded song played into the microphone. The purple circles on the outside represent the chromagram energy, and the yellow blinking circle in the middle is the estimated downbeat (continuously re-estimated from previously played audio).
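The circle-of-fifths layout boils down to one piece of arithmetic: a fifth is 7 semitones, so pitch class `pc` lands in slot `(7 * pc) % 12`. The sketch below shows that mapping in Python for consistency with the analysis code. The actual drawing was done in P5.js, and the function name, radius, and dot-size scaling are illustrative assumptions.

```python
import math

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def circle_of_fifths_layout(chroma, radius=1.0, max_dot=0.2):
    """Map a 12-bin chroma vector to (name, x, y, dot_size) tuples.

    Hypothetical helper: slot 0 (C) sits at the top and slots advance
    clockwise in screen coordinates, where y grows downward.
    """
    layout = []
    for pc, energy in enumerate(chroma):
        slot = (pc * 7) % 12  # C, G, D, A, ... get slots 0, 1, 2, 3, ...
        angle = 2 * math.pi * slot / 12 - math.pi / 2
        x = radius * math.cos(angle)
        y = radius * math.sin(angle)
        layout.append((NOTE_NAMES[pc], x, y, max_dot * energy))
    return layout


# Order of note names when walking clockwise around the circle.
clockwise = sorted(range(12), key=lambda pc: (pc * 7) % 12)
names_clockwise = [NOTE_NAMES[pc] for pc in clockwise]
```

Notes that are a fifth apart end up next to each other, so the three notes of a major or minor triad cluster in one arc of the circle instead of being spread around it.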

Chord Detection in the Browser:

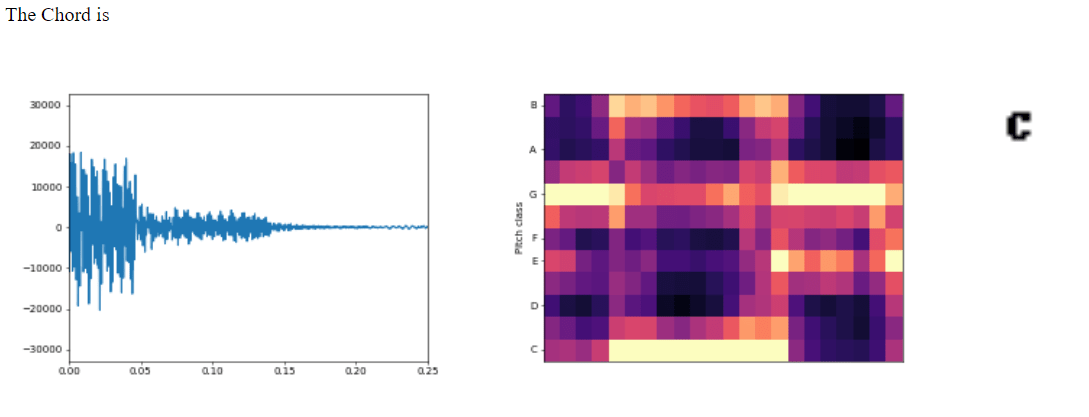

I then matched the chromagram against chromagram templates for common chords to predict the name of the chord being played. As a guitar player, I wanted a tool that could quickly tell me the names of the chords I played, right in my browser.
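A minimal sketch of this kind of template matching is shown below. The write-up does not say which chord templates or which similarity measure were used, so the 24 binary major/minor triad templates, the cosine similarity, and the function names are all assumptions made for illustration.

```python
import math

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]
CHORD_INTERVALS = {"maj": (0, 4, 7), "min": (0, 3, 7)}  # semitones above the root


def build_templates():
    """Build binary 12-bin templates for the 12 major and 12 minor triads."""
    templates = {}
    for root in range(12):
        for suffix, intervals in CHORD_INTERVALS.items():
            vec = [0.0] * 12
            for interval in intervals:
                vec[(root + interval) % 12] = 1.0
            templates[NOTE_NAMES[root] + suffix] = vec
    return templates


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm > 0 else 0.0


def estimate_chord(chroma, templates=None):
    """Return (chord_name, score) for the best template, or (None, 0.0) for silence."""
    if not any(chroma):
        return None, 0.0
    templates = templates or build_templates()
    best = max(templates, key=lambda name: cosine_similarity(chroma, templates[name]))
    return best, cosine_similarity(chroma, templates[best])


# Example: strong C, E and G with a little energy elsewhere.
chroma = [1.0, 0.05, 0.1, 0.0, 0.8, 0.1, 0.0, 0.9, 0.0, 0.1, 0.0, 0.05]
name, score = estimate_chord(chroma)
```

Cosine similarity ignores overall loudness, so a quietly strummed chord and a loud one match the same template. Binary templates are the simplest choice; weighting the root more heavily or adding seventh chords would be natural extensions.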

Here is a screenshot of the browser as I played a Cmaj chord on my guitar.

I will upload an interactive browser-based demo soon.

Some More Info:

My experiments with this project stemmed from investigating Automatic Chord Estimation algorithms. I found three standard implementations of benchmark methods from the last decade:

- Essentia/Librosa (basic template matching on a chromagram obtained from a Constant-Q transform) [Ref]

- Chordino, a VAMP plugin (NNLS chroma obtained by template matching with 88 templates, one for each tone, followed by a dynamic Bayesian network language model to predict chord names) [Ref]

- Madmom (a simpler implementation of the 3-layer DNN that produces a chromagram-like vector from the spectrogram, followed by a conditional random field language model to predict chord names) [Ref]

Here is my GitHub link with Python implementations of all three methods above (complete documentation coming soon): [link]