This is my documentation of the work I did in the class “Arts@ML” with Tod Machover at the MIT Media Lab (Fall 2020).

Throughout the class we discussed the arts, their role in our lives and across time, and the ways the answers to these questions are evolving. We had engaging conversations with expert curators, musicians, artists, activists and studios across the world. More information can be found here.

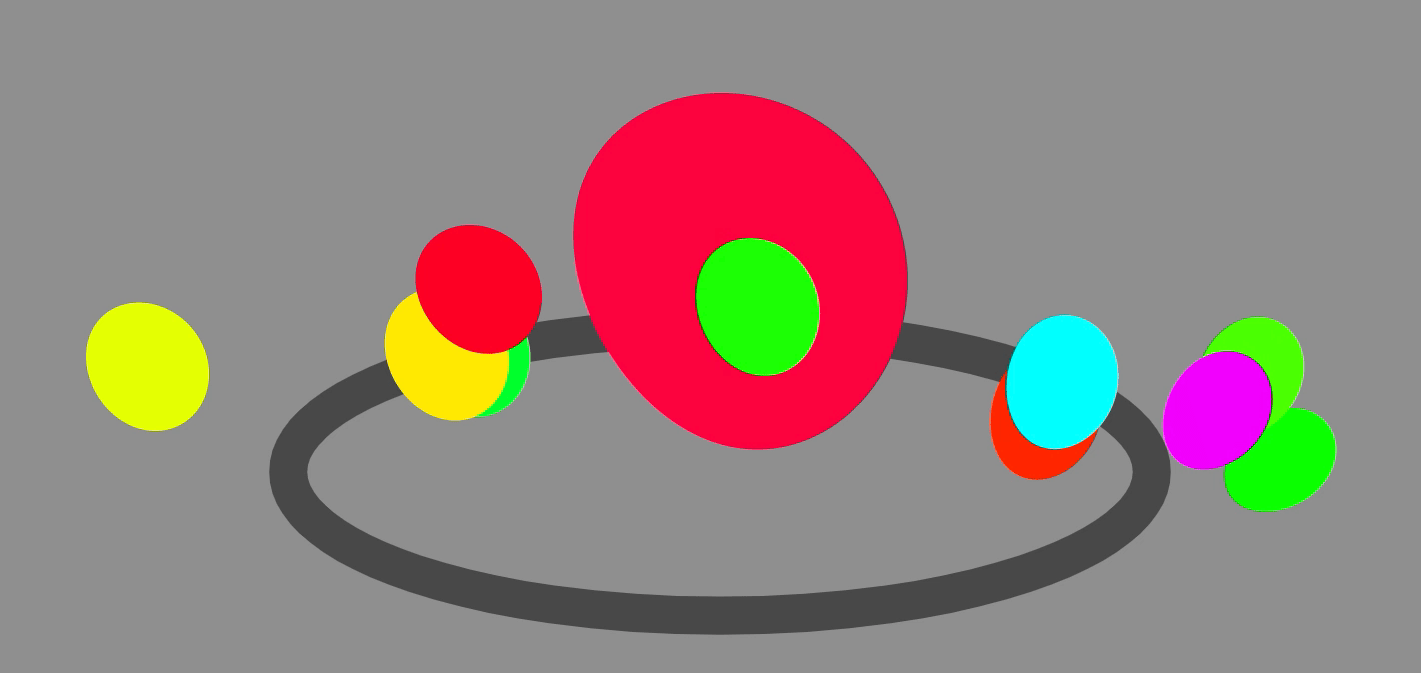

Final Piece (Blob Party)

I livestreamed a performance of the Blob Party (with the help of some human and algorithmic friends).

More details regarding this project can be found at the bottom of this documentation.

A.I. in Music Making

Through this class I looked at A.I. in music making and worked on multiple projects wrapped in that theme.

1. Introductions

At the beginning of the semester we were asked to create small pieces to introduce ourselves to the rest of the class. I created this A.I. music piece, in which I used tools from Magenta (Onsets and Frames and GANSynth) to generate all the sounds from a recording of my own voice from 1995, when I was 4 years old.

I also used a joystick as a metaphor for a controller that takes us from human audio to machine-generated audio. I am very interested in thinking about machine-generated art as being curated, controlled and moulded by the human wielding the joystick.

I also created a visual component for my introduction, using deep-learning-based first-order motion models to animate images from different decades of my own life (childhood, teenage years, present and hypothetical future).

I called this Puppeteering Myself. It led me to think more about human-controlled, machine-generated art, with a focus on taking responsibility for and ownership of not only the outputs produced but also the source material used in such models.

2. Standing on the shoulders of giants

By the middle of the course we were asked to study inspiring Media Labbers of the past. Along with some of my peers, I chose to study and share the ideas of Marvin Minsky.

Marvin is well known as one of the fathers of A.I. and was also an incredible improvisatory musician. We created a website to explore Marvin’s ideas through short vocal snippets from different interviews, placed in an interactive image of his famous living room. This room is full of objects and, with them, stories of his life and legacy.

Link to the interactive room: https://minsky-room.glitch.me/

3. Imagining the Impossible

We also imagined a museum exhibit that would be impossible to present in any current form of museum.

I presented “Lost in the conversations of a Forest”, an exhibit that would be presented in forests across the world. It involved tuning into the conversations of the trees of a forest, which are spread across space (mycorrhizal networks) and across time (seasons). I also created the accompanying concept video, which uses humming voices to simulate the conversations in the forest.

4. Blob Party – Musical Organisms

Blob Party, inspired by block parties, is about reclaiming space, both physical and sonic, in a celebration. In my experiments with human-controlled, machine-generated art, I have also been thinking about reclaiming responsibility. Though most A.I. art is presented as a computer-generated pastiche or imitation of a style, I am interested in the future of human-machine partnership in creativity.

With these ideas I created Blob Party, a browser-based musical experience where each person controls a blob that listens to their microphone. You can control the blob and its (physical and sonic) behaviors with sounds from your voice or instrument. The performance culminates in a dance floor where everyone logged in at the time can see each other’s blobs as they respond and react to the humans controlling them.
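As a rough illustration of how a microphone signal could drive a blob, here is a minimal sketch. It is not taken from the Blob Party source: the function names `rmsLevel`, `blobRadius` and `smooth`, and the pixel ranges, are assumptions made up for this example. It measures how loud one buffer of audio samples is, maps that level to a blob radius, and smooths the result so the blob does not jitter.

```javascript
// Hypothetical helpers; names and ranges are illustrative assumptions,
// not the actual Blob Party implementation.

// Root-mean-square level of one buffer of audio samples in [-1, 1].
function rmsLevel(samples) {
  if (samples.length === 0) return 0;
  let sumSquares = 0;
  for (const s of samples) sumSquares += s * s;
  return Math.sqrt(sumSquares / samples.length);
}

// Map a level in [0, 1] to a blob radius in pixels, clamping out-of-range input.
function blobRadius(level, minRadius = 20, maxRadius = 120) {
  const clamped = Math.min(1, Math.max(0, level));
  return minRadius + clamped * (maxRadius - minRadius);
}

// Exponential smoothing: move a fraction of the way from the previous value
// towards the new target on every frame.
function smooth(previous, target, factor = 0.2) {
  return previous + factor * (target - previous);
}
```

In a browser, the sample buffer could come from a Web Audio `AnalyserNode` (for example via `getFloatTimeDomainData`), with `blobRadius(rmsLevel(buffer))` fed through `smooth` once per animation frame.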

I shared this party with some of my friends across the world and recorded some of the performances. Here is a short demo of what it looks and sounds like.

All the sounds, including the beats in the background, are generated algorithmically (using Tone.JS and Gibber.JS) without any pre-recorded samples. The parameters of the music, including the samples triggered, are generated on the fly based on the interactions and activity of your blob. The more active your blob, the more variation in the sounds.
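The idea of activity driving variation can be sketched in a few lines. This is only an illustration under stated assumptions, not the mapping Blob Party actually uses: the pentatonic scale, the function names `activityScore`, `musicParams` and `nextNote`, and every numeric range are hypothetical. Activity is taken here to be the average distance the blob moves between frames; a more active blob plays notes more often and draws them from a wider set.

```javascript
// All names, scales and ranges below are illustrative assumptions.
const PENTATONIC = ["C4", "D4", "E4", "G4", "A4"];

// Activity in [0, 1]: mean distance between consecutive blob positions,
// normalised by an assumed maximum step size in pixels.
function activityScore(positions, maxStep = 50) {
  if (positions.length < 2) return 0;
  let total = 0;
  for (let i = 1; i < positions.length; i++) {
    const dx = positions[i].x - positions[i - 1].x;
    const dy = positions[i].y - positions[i - 1].y;
    total += Math.hypot(dx, dy);
  }
  const meanStep = total / (positions.length - 1);
  return Math.min(1, meanStep / maxStep);
}

// Higher activity means denser notes, more pitches to choose from,
// and a brighter filter setting.
function musicParams(activity) {
  return {
    noteProbability: 0.2 + 0.7 * activity,
    noteChoices: 1 + Math.round(activity * (PENTATONIC.length - 1)),
    filterCutoffHz: 400 + 3600 * activity,
  };
}

// Decide what to play on the next beat: a note name, or null for a rest.
// The random source is injectable so the behaviour can be tested.
function nextNote(activity, random = Math.random) {
  const params = musicParams(activity);
  if (random() >= params.noteProbability) return null;
  const index = Math.floor(random() * params.noteChoices);
  return PENTATONIC[index];
}
```

With Tone.js, a non-null result could then be handed to a synth, for instance `synth.triggerAttackRelease(note, "8n")` on a `Tone.Synth`, so that nothing is played from a recording.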

I am looking forward to organizing more Blob Parties and to adding more dimensions of interactivity and a wider range of musical output in the next editions.

Thank you to my wonderful classmates and Prof. Tod Machover for the inspiring conversations and ideas, and for supporting everyone’s growth on their own terms.