This is my documentation for my class “Sound: Past and Future” with Tod Machover at the MIT Media Lab (Spring 2020).

Over the semester we studied a range of radical composers, ran into the unprecedented coronavirus lockdown midway through, and finally finished the class with a glorious performance session over Zoom. Everyone in the class created tremendously thought-provoking projects, performances and ideas, and I am thankful for the opportunity to have been amongst them.

Final Piece:

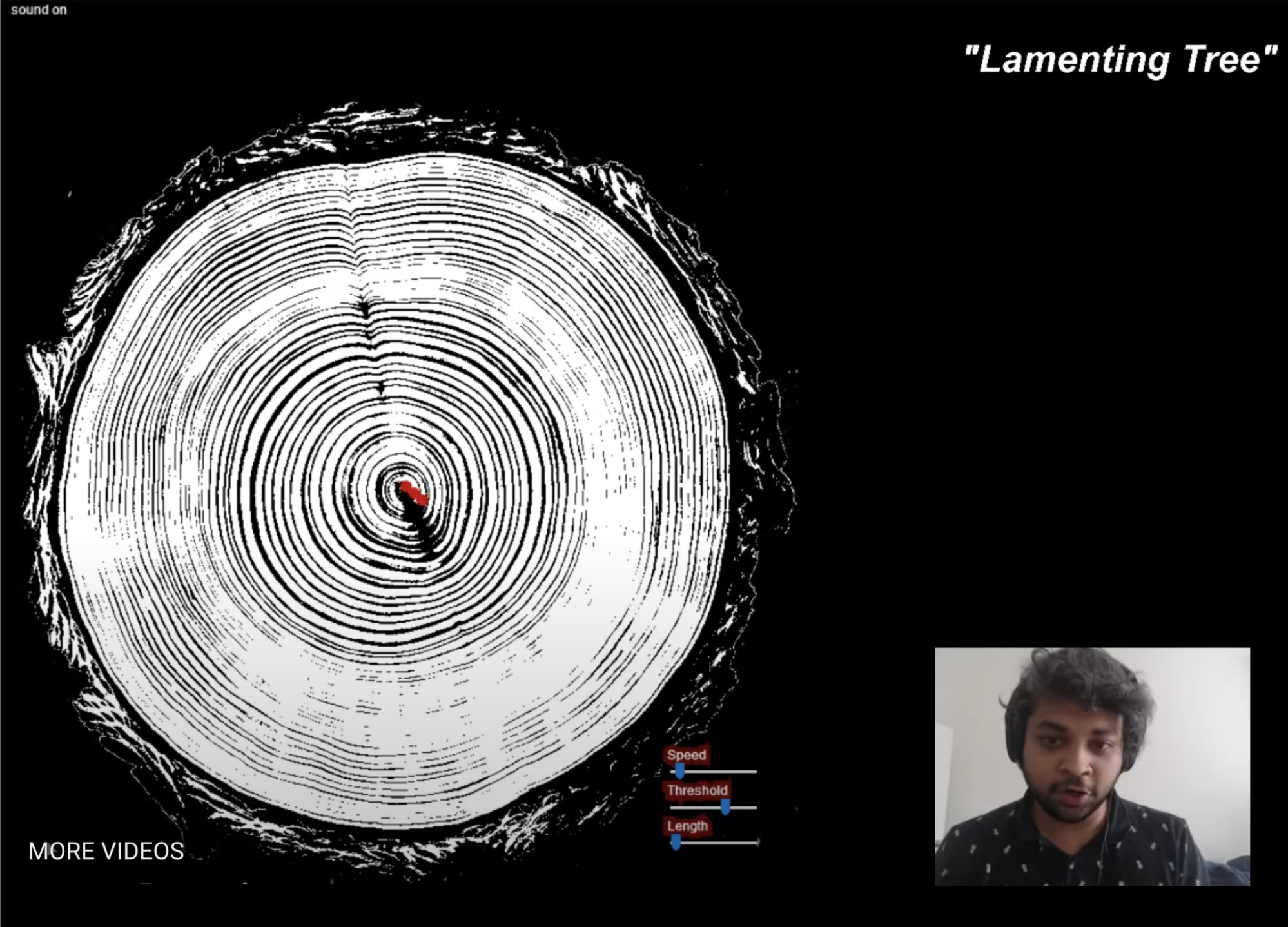

I livestreamed a performance of my piece “Lamenting Tree”.

It was an image-to-sound synthesizer built around a circular, gramophone-style representation of tree trunk cross sections. The sounds were generated from the pixels of the tree rings, with low frequencies at the center and high frequencies at the outermost points. During the performance I dynamically controlled the speed, range and quality of the sounds. I built the entire system in JavaScript (p5.js and Tone.js).
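The center-to-edge frequency mapping can be sketched in a few lines of plain JavaScript. This is a minimal sketch, not the code used in the piece. The 80 Hz to 8 kHz range and the logarithmic (equal-ratio) spacing are assumptions for illustration, and `radiusToFrequency` and `bandCenterFrequencies` are hypothetical names. The band count of 30 matches the number of noise bands the system uses.

```javascript
// Assumed frequency limits: the actual range used in the piece is not documented.
const F_MIN = 80; // Hz, lowest band at the center of the trunk (assumption)
const F_MAX = 8000; // Hz, highest band at the outer edge (assumption)

// Map a normalized radius (0 = trunk center, 1 = outermost ring) to a frequency.
// Interpolating exponentially means equal steps in radius give equal pitch
// ratios rather than equal steps in Hz.
function radiusToFrequency(r, fMin = F_MIN, fMax = F_MAX) {
  const t = Math.min(Math.max(r, 0), 1); // clamp to [0, 1]
  return fMin * Math.pow(fMax / fMin, t);
}

// Center frequencies for n bands spread evenly from the center to the edge.
function bandCenterFrequencies(n, fMin = F_MIN, fMax = F_MAX) {
  const freqs = [];
  for (let i = 0; i < n; i++) {
    const r = n === 1 ? 0 : i / (n - 1);
    freqs.push(radiusToFrequency(r, fMin, fMax));
  }
  return freqs;
}

const bands = bandCenterFrequencies(30);
console.log(bands[0].toFixed(1), bands[29].toFixed(1)); // 80.0 8000.0
```

With logarithmic spacing, each band sits the same pitch ratio above the previous one (here about 1.17, i.e. 100^(1/29)). With a linear spacing in Hz, the lowest octaves would get at most one or two bands each. Either choice is a legitimate design decision.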

I enjoyed creating massive sounds by layering multiple band-pass-filtered noise sources to represent the lament of a tree that has been cut down.

Motivations:

I have long been fascinated by images of tree trunks. Apart from being a somber reminder of a dead tree, a trunk's cross section is like a biography of the individual tree. Tree rings not only reveal the age and species of the tree, but are often used to reconstruct a rich historical weather record. Markings can indicate periods of drought and the direction of strong winds. Changes in ring thickness and asymmetry, recorded year by year, can indicate climate changes over long periods of 30 to 100 years. Scars show that a tree survived forest fires, and changes in the spacing between rings record good and stunted growing seasons. I knew I wanted to use images of tree rings to retell these stories through the lines and cracks that reminded me so much of the grooves on a vinyl LP.

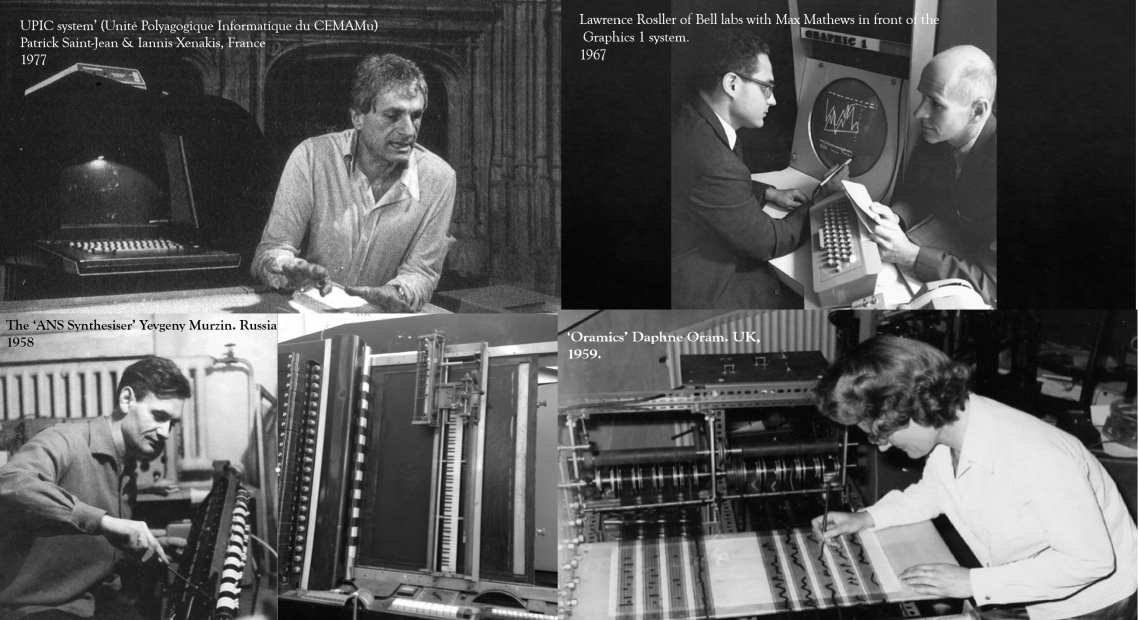

Images have inspired sound synthesis since the late 1930s. Although I was most directly inspired by the ANS synthesizer, several other major image-based synthesis systems also motivated my work.

Although digital recreations exist for many of these systems, I chose to reinterpret the idea as a performative instrument rather than a static sonic rendering of an image. I do not want to suggest that the sounds derived from an image are its “real” sound. A sonic mapping of an image (or of any other data) is merely the result of choices made by the human performer and of choices built into the system architecture, and I think it is important to make that distinction clear.

The sounds in my “Lamenting Tree” come from two components of the system: the underlying image and the scanning line. A threshold dial dynamically controls how much of the underlying image is mapped into sound. The scanning line determines which part of the image is currently being sonified, and its length and speed are also controlled during the performance. The sound itself comes from 30 band-pass-filtered noise generators built with Tone.js. The center of the scanning line corresponds to the lowest frequency bands and its outermost point to the highest. As the line moves over the image, the pixels lying on it set how much each corresponding band-passed noise signal contributes to the overall sound.
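One frame of the scan described above can be sketched as a function that turns the pixels under the scanning line into 30 band levels. This is a minimal sketch under stated assumptions, not the piece's actual code:

- the image is grayscale with brightness in [0, 1], and brighter pixels are louder;
- the threshold dial acts as a simple gate;
- each band's level is the average of the pixels in its segment of the line.

The names `scanLineGains`, `threshold` and the image object shape are hypothetical. The sketch uses plain JavaScript so it runs without p5.js or Tone.js.

```javascript
// Hypothetical sketch: the image format, parameter names and the averaging
// step are assumptions for illustration, not the code used in the piece.
const NUM_BANDS = 30;

// image: { width, height, data } with data[y * width + x] a brightness in [0, 1].
// (cx, cy): trunk center in pixels; angle: direction of the scanning line in
// radians; length: line length in pixels; threshold: brightness below which a
// pixel is treated as silent (raising it lets less of the image through).
// Returns one gain in [0, 1] per band, with band 0 at the center of the line.
function scanLineGains(image, cx, cy, angle, length, threshold, numBands = NUM_BANDS) {
  const sums = new Array(numBands).fill(0);
  const counts = new Array(numBands).fill(0);
  const steps = Math.max(1, Math.round(length)); // about one sample per pixel
  for (let s = 0; s < steps; s++) {
    const r = (s + 0.5) / steps; // normalized distance along the line, in (0, 1)
    const band = Math.min(numBands - 1, Math.floor(r * numBands));
    counts[band]++;
    const x = Math.round(cx + Math.cos(angle) * r * length);
    const y = Math.round(cy + Math.sin(angle) * r * length);
    // Samples that fall outside the image count as silence.
    if (x < 0 || y < 0 || x >= image.width || y >= image.height) continue;
    const v = image.data[y * image.width + x];
    if (v >= threshold) sums[band] += v;
  }
  // Average the samples in each band; bands with no samples stay silent.
  return sums.map((sum, i) => (counts[i] > 0 ? sum / counts[i] : 0));
}

// Example: a synthetic 130x130 "trunk" with one bright ring between radii 30 and 40.
const W = 130;
const H = 130;
const ringData = new Array(W * H).fill(0);
for (let y = 0; y < H; y++) {
  for (let x = 0; x < W; x++) {
    const d = Math.hypot(x - 65, y - 65);
    if (d >= 30 && d < 40) ringData[y * W + x] = 1;
  }
}
const ringImage = { width: W, height: H, data: ringData };
// Horizontal scanning line (angle 0), 60 px long, threshold 0.5.
const gains = scanLineGains(ringImage, 65, 65, 0, 60, 0.5);
console.log(gains.map((g) => g.toFixed(2)).join(" "));
```

In this example only the bands whose segment of the line crosses the bright ring come out non-zero.

In a p5.js sketch, the rotation could come from advancing `angle` by `speed * deltaTime / 1000` each frame (p5.js exposes `deltaTime` in milliseconds). Each returned gain could then set the level of the matching noise band. For example, `bandGain.gain.rampTo(value, 0.05)` would smooth level changes to avoid clicks, where `bandGain` is a hypothetical `Tone.Gain` node for that band. If the dark ring lines, rather than the lighter wood, should sound, the brightness can be inverted with `1 - v` before the threshold test.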

I also performed a non-circular version of the system, using several underlying image textures, at an online algorave concert. The event took place in VR, with performers joining from all over the world.

Assignments through the Class:

In the first week we studied the major radical composers of the post-World War II era: Stockhausen, Boulez, Xenakis and Cage.

I created a one-minute piece inspired by Stockhausen’s atmospheric electronic sounds and John Cage’s chance processes. I was also reading Cage’s “Silence” and thinking about how I was adding noise to an already sound-polluted world.

Here is my piece “People are Noise”

In the second section we studied more multimodal radical thinkers such as Maryanne Amacher, Anna Thorvaldsdottir and Christine Sun Kim. I was particularly struck by the multisensory phenomena explored in Christine Sun Kim’s work. We were tasked with creating a piece that had a visual component, either to accompany the sound or to help the audience understand and decipher the piece as a graphic score.

I decided on a completely visual approach in which the music lives within the visuals, leaving the sonic palette muted. I intended the piece to be shown on a large screen in a dark room, so that the visual element would be imposing and force the audience to hear with their eyes.

Here is my piece “Muted”

Thank you to my wonderful classmates and Prof. Tod Machover for truly inspiring conversations and ideas, and for supporting everyone’s growth on their own terms.