When I learnt about PoseNet, I really wanted to use it for a visual installation. I was going to be at an audiovisual festival in Bangalore, and I saw that as my opportunity to test out a first prototype in that direction.

I have not yet entered the territory of sketching (digital or otherwise) and often have to rely on geometric shapes for visualizations. At the time, I was also drawing huge inspiration from Google’s Quick, Draw! dataset, a large collection of people’s doodles of different shapes, stored with their stroke information. It was a wonderful experiment that resulted in a beautiful repository of people’s casual doodles of different words such as buses, guitars, eyes and ships (50 million drawings across 345 categories). A bunch of generative projects have drawn inspiration from it, and so have many of my own experiments. I knew exactly what I had to do to visualize my PoseNet experiments.

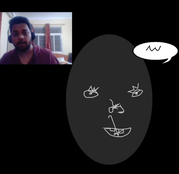

I used PoseNet’s JS library to detect key points from the camera, and then picked a random doodle from the Quick, Draw! dataset for each of the nose, eyes and mouth, so that the identified face was constantly redrawn with people’s doodles. The concept was that every person who walks in front of the camera is instantly detected and regenerated from many different people’s perceptions of those features, in a picture that keeps changing.
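The step described above can be sketched roughly as follows. This is a minimal sketch and not the installation's actual code: it assumes keypoints shaped like the ones PoseNet's JS library returns (`part`, `score`, `position`) and doodles in the simplified Quick, Draw! stroke format (lists of x and y coordinates in the 0 to 255 range). The names `PART_TO_CATEGORY`, `placeDoodle`, `redrawFace`, the doodle size and the score threshold are all hypothetical. Only the nose and eyes are handled here, since how the mouth was positioned is not described.

```typescript
// One stroke in the simplified Quick, Draw! format: [xs, ys], coordinates in 0..255.
type Stroke = [number[], number[]];
type Doodle = Stroke[];

// Assumed shape of a single PoseNet keypoint.
interface Keypoint {
  part: string;
  score: number;
  position: { x: number; y: number };
}

interface PlacedDoodle {
  part: string;
  strokes: Doodle;
}

// Hypothetical mapping from keypoint names to doodle categories.
const PART_TO_CATEGORY: Record<string, string> = {
  nose: "nose",
  leftEye: "eye",
  rightEye: "eye",
};

// Pick one item at random; rng is injectable so the choice can be made repeatable.
function pickRandom<T>(items: T[], rng: () => number = Math.random): T {
  return items[Math.floor(rng() * items.length)];
}

// Scale a 0..255 doodle to `size` pixels and centre it on (cx, cy).
function placeDoodle(doodle: Doodle, cx: number, cy: number, size: number): Doodle {
  const scale = size / 255;
  const half = size / 2;
  return doodle.map(([xs, ys]): Stroke => [
    xs.map((x) => cx - half + x * scale),
    ys.map((y) => cy - half + y * scale),
  ]);
}

// For every confidently detected facial keypoint, choose a fresh random doodle
// from the matching category and position it on that keypoint.
function redrawFace(
  keypoints: Keypoint[],
  bank: Record<string, Doodle[]>,
  size: number = 60,
  minScore: number = 0.5,
  rng: () => number = Math.random
): PlacedDoodle[] {
  const placed: PlacedDoodle[] = [];
  for (const kp of keypoints) {
    const category = PART_TO_CATEGORY[kp.part];
    if (category === undefined || kp.score < minScore) continue;
    const candidates = bank[category];
    if (!candidates || candidates.length === 0) continue;
    const doodle = pickRandom(candidates, rng);
    placed.push({
      part: kp.part,
      strokes: placeDoodle(doodle, kp.position.x, kp.position.y, size),
    });
  }
  return placed;
}
```

Calling `redrawFace` on every video frame with the latest keypoints would give the constantly changing face; the returned strokes can then be drawn as polylines on a canvas.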

Interesting Lesson: Through this experience I learnt something very interesting about how participants behave. When I showed the detected eye and nose positions on a mirror-like display, people would constantly try to trick the detection: they would move really fast or run into the frame from random directions. PoseNet was up to the challenge, but the reaction from the participants was one of “I’ll get you next time, algorithm”.

On the next day of the experiment, I replaced the mirror-like view with a completely black screen and displayed only the representations of the eyes and nose when they were detected. This time people were constantly surprised and impressed by the fact that the algorithm could find them. The reaction from the participants was always one of wonder!

I observed that when information is presented in an obvious way, so that the task at hand (detecting the eyes and nose) is something participants can easily do in their own minds, they dismiss the algorithm as doing no better than the obvious. When presented with incomplete information, on the other hand, participants relied on the algorithm to reveal what was hidden instead of competing with it, which was a much more rewarding experience.

I find it fascinating to think about the culture that gets built around automation and algorithms as they become an active part of our surroundings.